Parameter-efficient fine-tuning of large-scale pre-trained language models | Nature Machine Intelligence

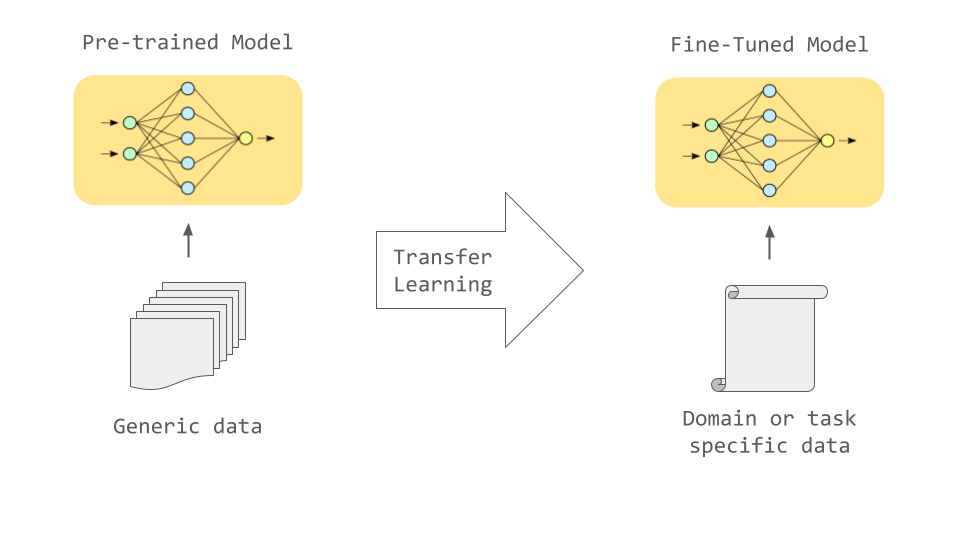

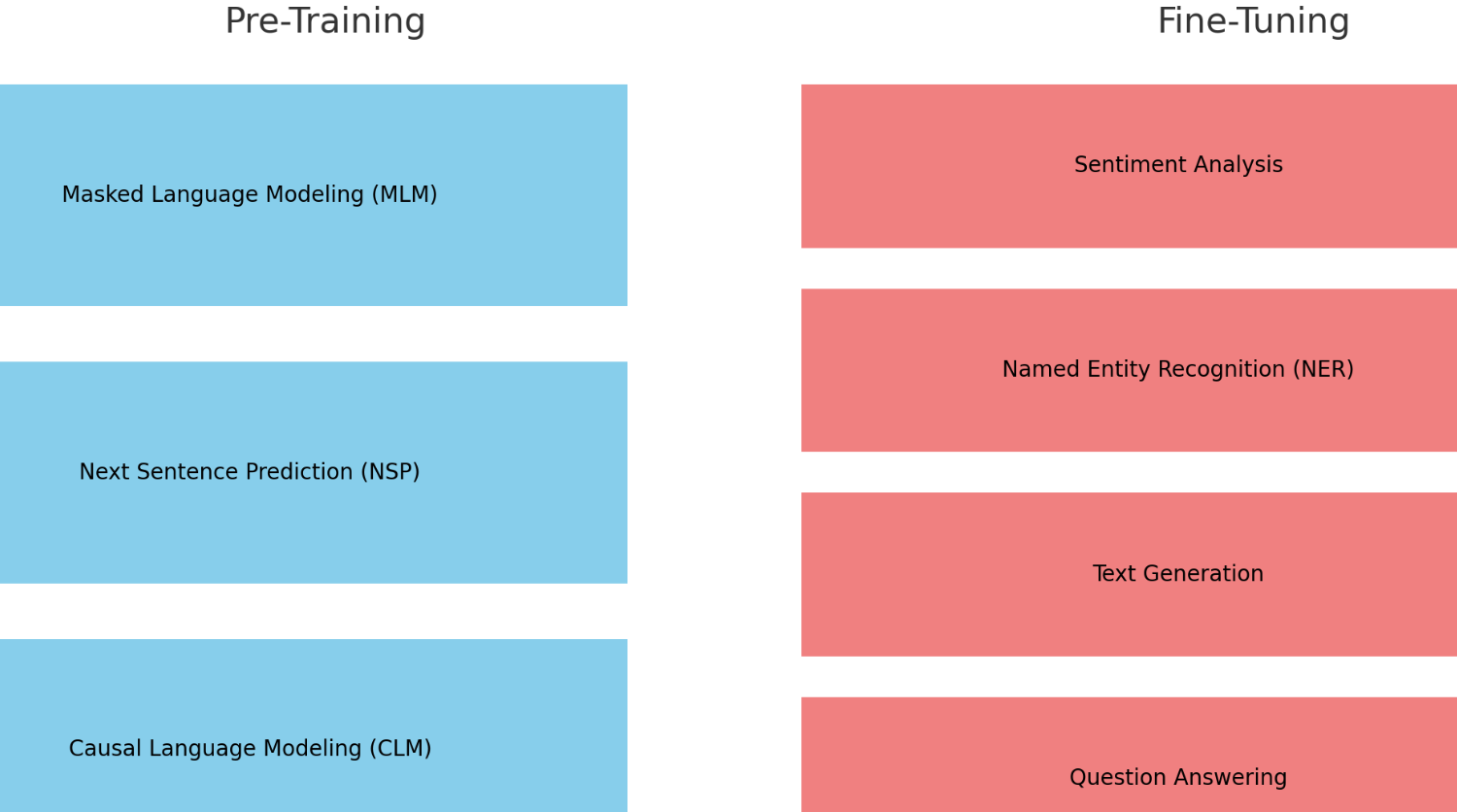

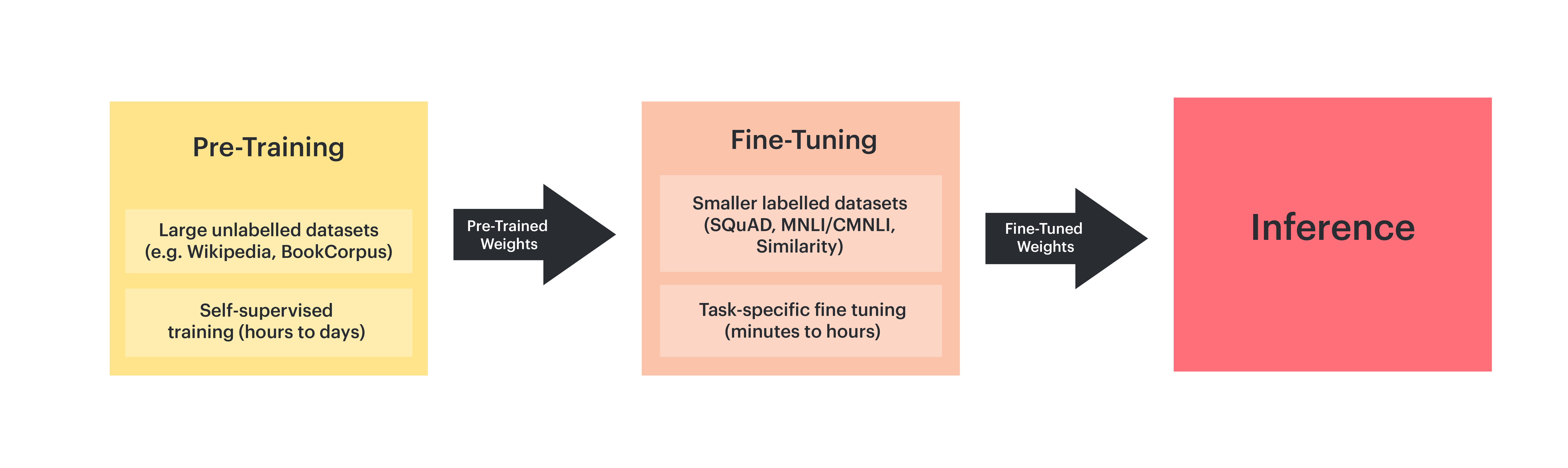

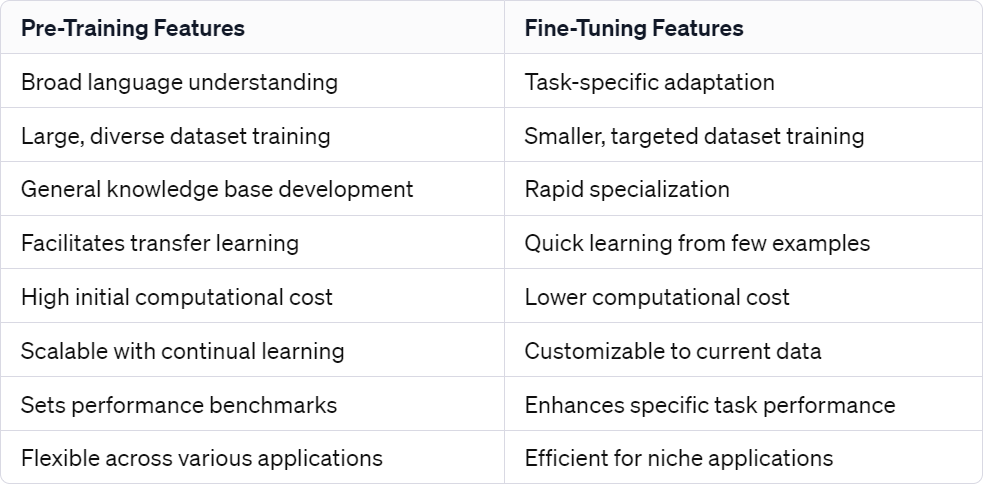

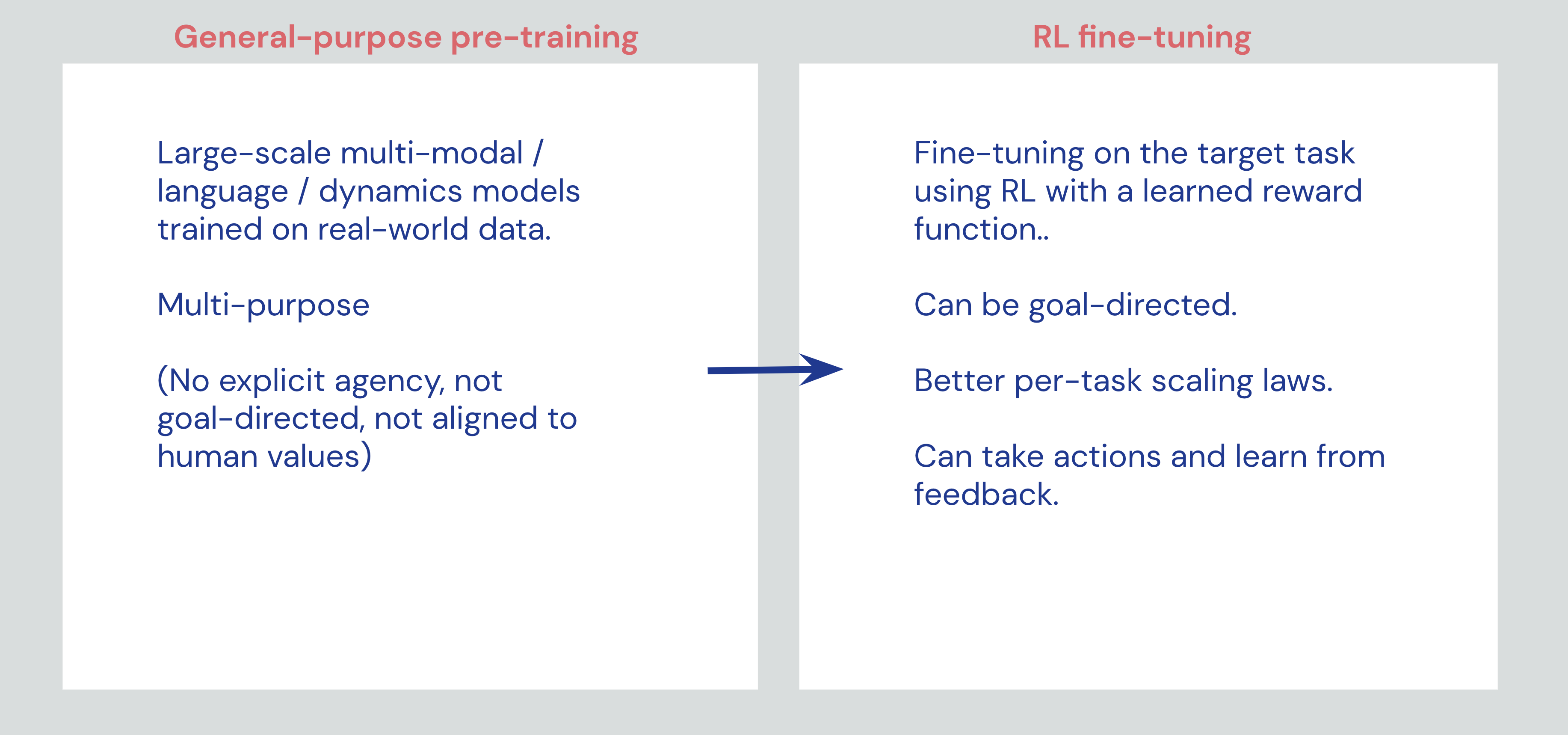

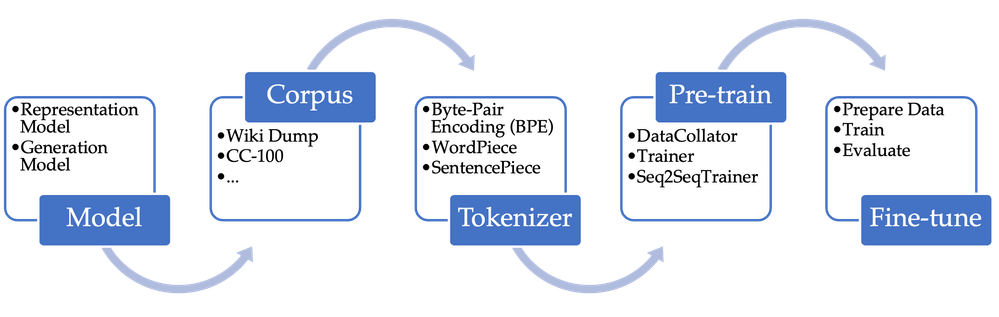

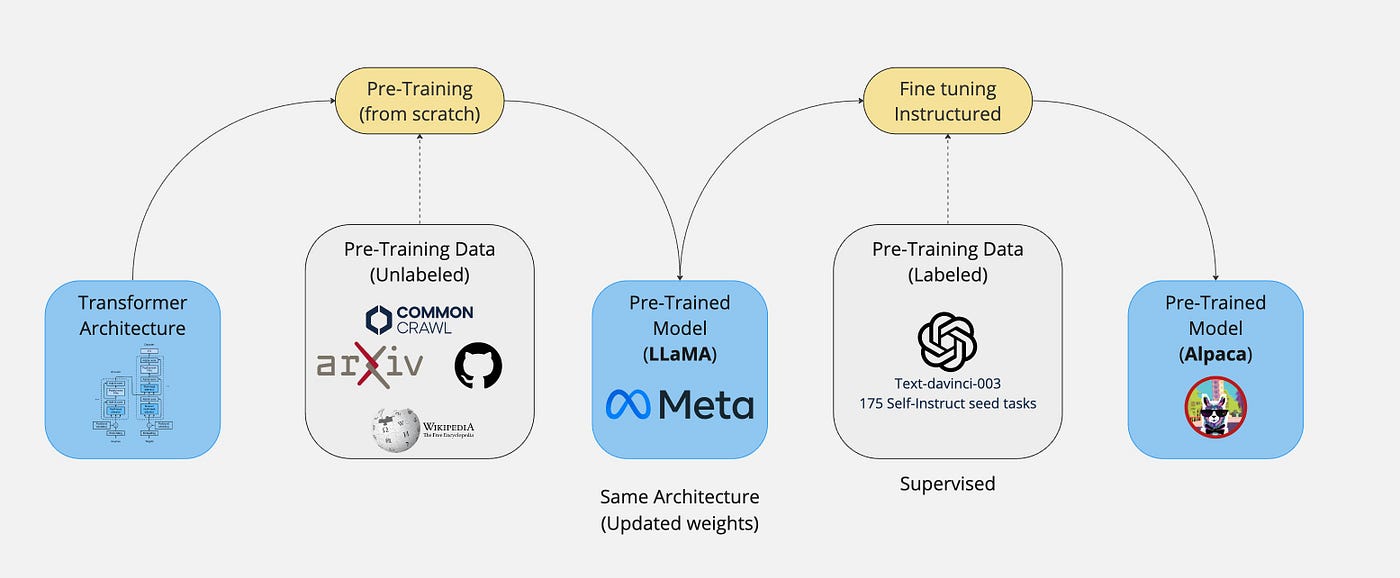

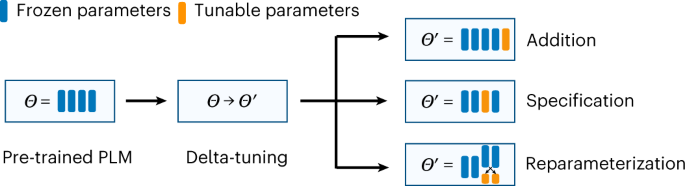

From Fundamentals to Expertise: The Professional Route of Pre-Training to Fine-Tuning in Language Models

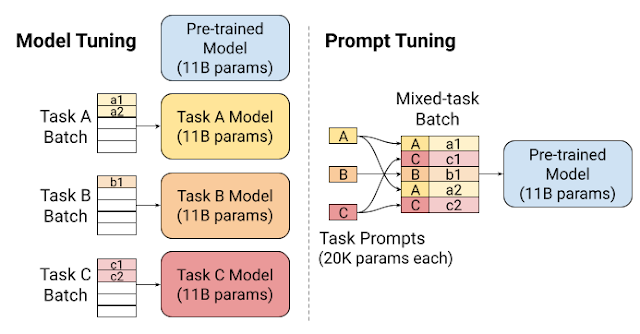

Can prompt engineering methods surpass fine-tuning performance with pre-trained large language models? | by lucalila | Medium

LED angel eyes tuning for BMW 5 Series E60 E61 Pre-LCI headlights 520i 530i 540i 550i 525i 545i halo ring set DRL accessories

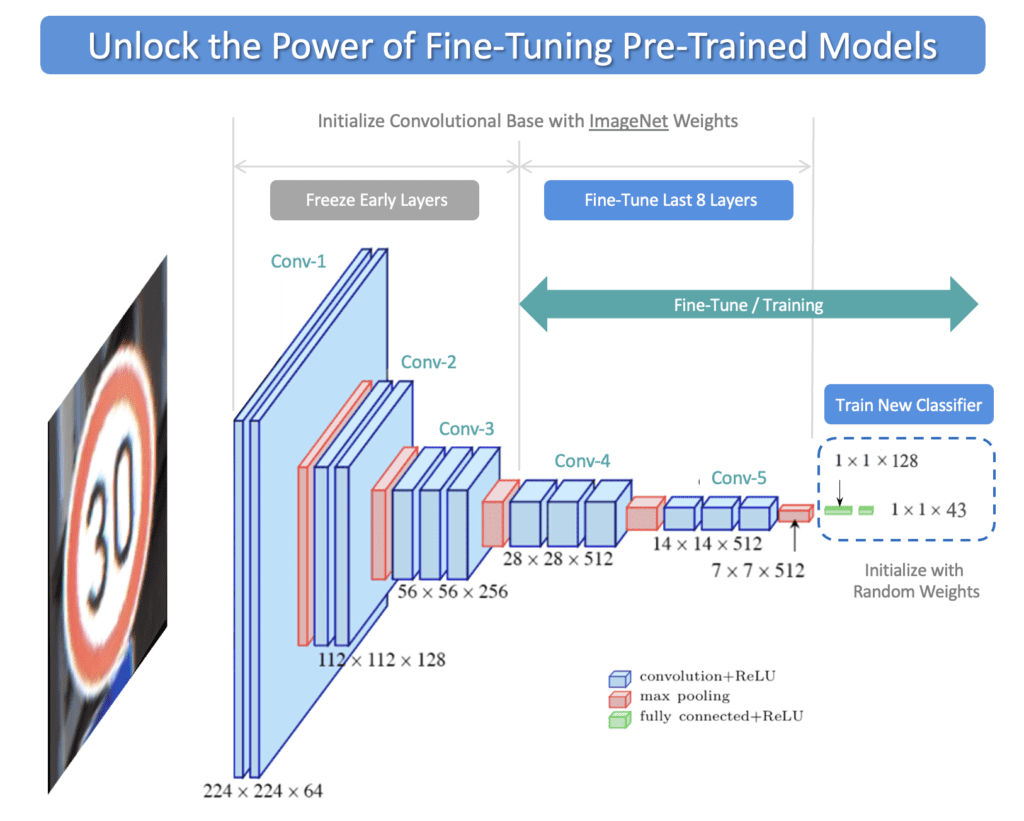

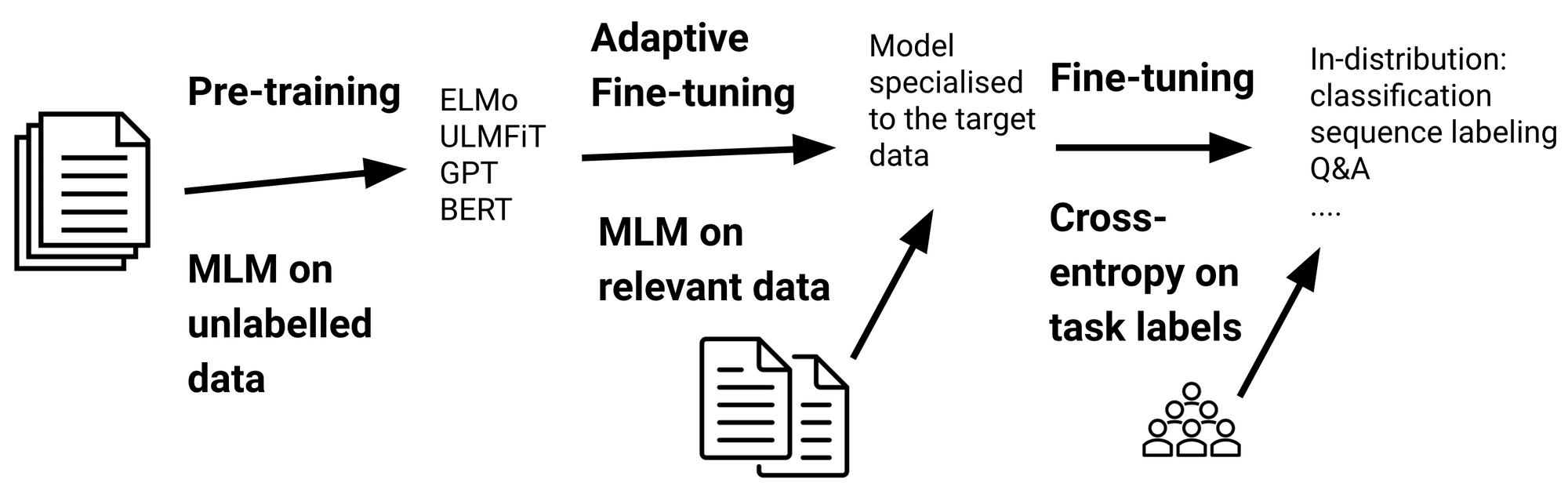

Pre-training and fine-tuning paradigm: full fine-tuning and frozen and... | Download Scientific Diagram

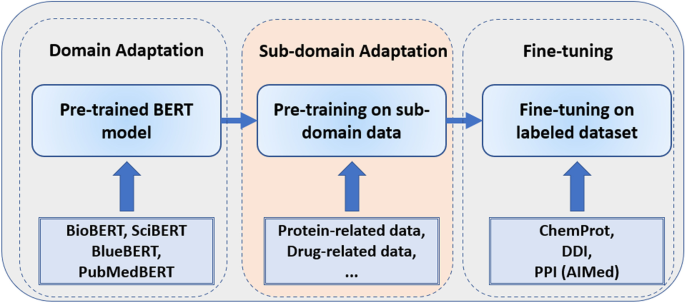

Investigation of improving the pre-training and fine-tuning of BERT model for biomedical relation extraction | BMC Bioinformatics | Full Text

Diagram for different pre-training and fine-tuning setups. (a) Common... | Download Scientific Diagram

This AI Paper from CMU and Meta AI Unveils Pre-Instruction-Tuning (PIT): A Game-Changer for Training Language Models on Factual Knowledge - MarkTechPost

🇹🇷 Tuning Garage Since 1984 🇹🇷 BMW E90 3 Series 2007 ✔️ M3 Body Kit ✔️ E60 M5 Wheels ✔️ Tire Set ✔️Akrapo... | Instagram